OR Driven Digital Transformation

Digital Transformation · Strategy · March 2026

Fix What's Actually Broken

The manufacturing sector solved this problem fifty years ago. Banks are still paying consultants to learn it the hard way. An Operations Research approach to digital transformation: instrument, find the bottleneck, fix it, repeat.

There is a pattern so common in financial services that it has become almost ritual. A bank identifies that its digital lending experience is lagging. The Board approves a multimillion-dollar programme. Consultants arrive. A requirements document blooms to several hundred pages. Eighteen months later something launches: over budget, past deadline, and built against a landscape that has already moved on.

The irony is that the manufacturing industry solved this problem fifty years ago. They just called it something different.

The factory floor insight

Operations Research emerged from World War II logistics. By the 1980s Toyota had operationalised it into the Toyota Production System, and Eliyahu Goldratt had formalised it as the Theory of Constraints. The central insight: a system's throughput is determined by its bottleneck, not its average performance.

You can invest millions optimising every other station on a production line. If one station can only process 200 units an hour, you will never ship 201. Work-in-progress simply piles up upstream, invisible until the inventory overflows.
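A minimal sketch of that arithmetic, assuming a simple serial line in steady state. The station names and capacities are hypothetical, chosen only to mirror the 200-units-an-hour example above.

```python
def line_throughput(capacities):
    """Steady-state throughput of a serial line is capped by its slowest station."""
    return min(capacities.values())


def bottleneck(capacities):
    """Name of the station with the lowest capacity."""
    return min(capacities, key=capacities.get)


# Hypothetical capacities in units per hour.
stations = {"cutting": 350, "welding": 200, "painting": 400, "packing": 500}

baseline = line_throughput(stations)                    # 200, set by welding
polished = line_throughput({**stations, "painting": 800})  # still 200
relieved = {**stations, "welding": 380}                 # invest at the constraint
improved = line_throughput(relieved)                    # 350, and the constraint moves

print(bottleneck(stations), baseline, polished, improved, bottleneck(relieved))
```

Doubling painting capacity changes nothing. Lifting welding raises throughput to 350 and moves the constraint to cutting, which is why the analysis has to be rerun after every fix.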

Banks have production lines too. A loan origination journey is a sequence: awareness → inquiry → application → documents → verification → decision → approval → settlement. At every transition, volume drops. Some of that is legitimate. Much of it is invisible friction — a form that times out, a document checklist that is ambiguous, a decision that takes four days when the applicant expected four minutes.

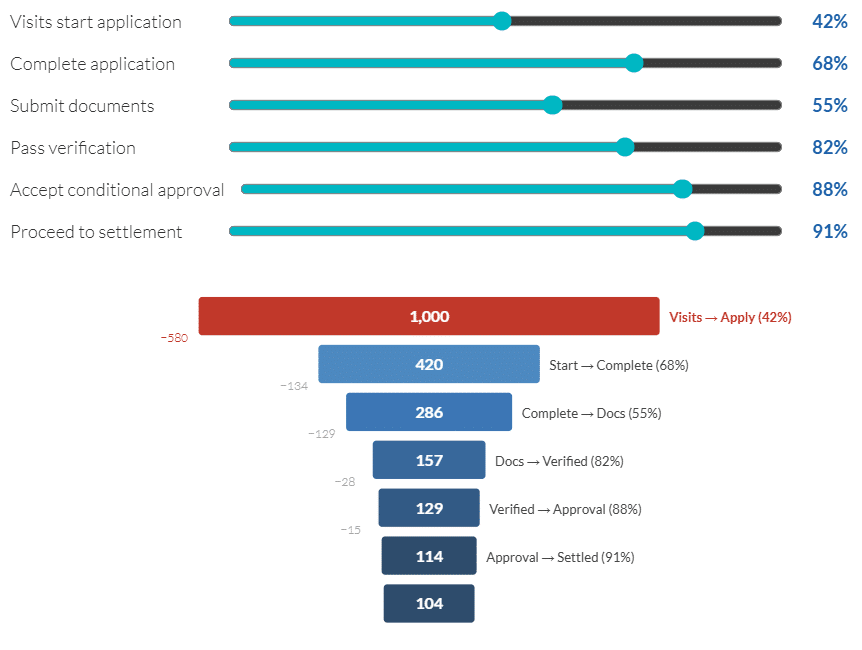


Your constraint is where you should invest first, not wherever looks most impressive to the Board.
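As a rough illustration, the constraint can be located from nothing more than stage counts. The counts below are invented for the sketch, and ranking transitions by conversion rate is an assumption: ranking by absolute volume lost would always point at the top of the funnel, so the rate is usually the more useful signal.

```python
# Hypothetical monthly counts of applicants reaching each stage.
funnel = [
    ("awareness", 10000),
    ("inquiry", 5000),
    ("application", 3000),
    ("documents", 2400),
    ("verification", 720),
    ("decision", 650),
    ("approval", 600),
    ("settlement", 570),
]


def transition_losses(counts):
    """For each adjacent pair of stages: (from, to, volume lost, conversion rate)."""
    rows = []
    for (src, n_src), (dst, n_dst) in zip(counts, counts[1:]):
        rows.append((src, dst, n_src - n_dst, n_dst / n_src))
    return rows


def find_constraint(counts):
    """The transition with the lowest conversion rate."""
    return min(transition_losses(counts), key=lambda row: row[3])


src, dst, lost, rate = find_constraint(funnel)
print(f"Constraint: {src} -> {dst}, {lost} lost, {rate:.0%} conversion")
```

In this made-up funnel the weakest transition is documents to verification, even though more raw volume disappears between awareness and inquiry.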

The analytics prerequisite

You cannot find a bottleneck you cannot see. This is where most transformation programmes fail before they begin: they leap to solutions (new platforms, new apps, new interfaces) without first instrumenting the existing process to understand where the losses actually occur.

In manufacturing, this is called value stream mapping. You follow a unit of production from raw material to shipped product and document every step, every wait time, every rework loop. The map rarely produces surprises about what the problem is. It almost always produces surprises about where it is.
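A value stream map for a loan pipeline can start as a simple event log. The sketch below assumes each application emits a timestamped event when it enters a stage; the log format, identifiers, and timestamps are all hypothetical.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median

# Hypothetical event log: (application id, stage entered, ISO timestamp).
EVENTS = [
    ("A1", "application", "2026-03-02T09:00"),
    ("A1", "documents", "2026-03-02T09:20"),
    ("A1", "verification", "2026-03-06T09:20"),
    ("A1", "decision", "2026-03-06T15:20"),
    ("A2", "application", "2026-03-03T10:00"),
    ("A2", "documents", "2026-03-03T10:30"),
    ("A2", "verification", "2026-03-05T10:30"),
    ("A2", "decision", "2026-03-05T12:30"),
]


def median_stage_hours(events):
    """Median hours spent in each stage, measured from entry to the next stage's entry."""
    by_app = defaultdict(list)
    for app_id, stage, ts in events:
        by_app[app_id].append((datetime.fromisoformat(ts), stage))

    durations = defaultdict(list)
    for visits in by_app.values():
        visits.sort()  # chronological order per application
        for (start, stage), (end, _next_stage) in zip(visits, visits[1:]):
            durations[stage].append((end - start).total_seconds() / 3600)
    return {stage: median(hours) for stage, hours in durations.items()}


hours = median_stage_hours(EVENTS)
slowest = max(hours, key=hours.get)
print(hours, slowest)
```

With this sample data the application form takes minutes while document collection takes days. The last stage in each log has no exit event, so it is left unmeasured rather than guessed.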


Fixing a downstream stage while the real constraint is upstream does almost nothing to throughput.
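That claim is easy to check with a toy simulation. This is a deliberately crude model, not a description of any real origination system: three serial stages, fixed daily capacities, steady arrivals, and work that advances at most one stage per day. All numbers are hypothetical.

```python
def simulate(capacities, arrivals_per_day, days):
    """Return (applications completed, queue left at each stage) after `days`."""
    queues = [0] * len(capacities)
    completed = 0
    for _ in range(days):
        queues[0] += arrivals_per_day
        # Work the last stage first so an application advances one stage per day.
        for i in reversed(range(len(capacities))):
            moved = min(queues[i], capacities[i])
            queues[i] -= moved
            if i + 1 < len(capacities):
                queues[i + 1] += moved
            else:
                completed += moved
    return completed, queues


# Stage order: documents, verification, decision (applications per day).
base = simulate([20, 50, 30], arrivals_per_day=40, days=30)
downstream_fix = simulate([20, 50, 60], arrivals_per_day=40, days=30)
upstream_fix = simulate([40, 50, 30], arrivals_per_day=40, days=30)

print(base, downstream_fix, upstream_fix)
```

Doubling decision capacity leaves completions unchanged at 560, because documents only releases 20 a day. Doubling documents capacity lifts completions to 840 and the queue reappears in front of decision, which is now the constraint.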

Small fixes, compounding returns

Once you can see the funnel, the constraint-based approach becomes straightforward: find the biggest source of leakage, understand it, fix it, measure the improvement, move to the next one. This is not a new idea — it is Agile methodology applied through an Operations Research lens.

The difference from conventional Agile in digital transformation is the rigour of the diagnostic step. Too many Agile programmes in banking are Agile in delivery cadence but still based on imagined requirements — features that someone believes will improve conversion, without having measured what is actually causing the drop.

- Instrument before you build. Deploy analytics across every step of the existing customer journey, however clunky it is. You need a baseline before you can claim an improvement.

- Map the value stream. Document every handoff, every wait state, every manual step. Calculate where volume is lost and time is consumed. This map is your transformation backlog, ranked by impact.

- Attack the constraint, not the wish list. Fix the biggest bottleneck first, even if it is unglamorous. A better document checklist email might outperform a new mobile app if collection is where 40% of applicants drop off.

- Measure, confirm, move on. Validate that the fix worked. Quantify the improvement. Rerun your funnel analysis to find where the constraint has moved. Repeat.

- Build platforms only when constraints demand them. Sometimes the bottleneck genuinely requires new infrastructure. Often it does not. Know the difference before committing the budget.
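The loop described in the list above can be sketched in a few lines. The conversion rates, the fixed 10-point lift per sprint, and the 95% ceiling are assumptions made for illustration, not measured results.

```python
from math import prod


def settled(leads, rates):
    """Loans reaching settlement, given a conversion rate per stage."""
    return leads * prod(rates.values())


def run_sprints(leads, rates, sprints, lift=0.10, ceiling=0.95):
    """Each sprint lifts the weakest stage by `lift`, then the funnel is re-measured."""
    rates = dict(rates)  # leave the caller's baseline untouched
    history = [settled(leads, rates)]
    for _ in range(sprints):
        worst = min(rates, key=rates.get)
        rates[worst] = min(ceiling, rates[worst] + lift)
        history.append(settled(leads, rates))
    return history


# Hypothetical stage conversion rates.
baseline = {"application": 0.5, "documents": 0.4, "decision": 0.8, "settlement": 0.9}
history = run_sprints(leads=1000, rates=baseline, sprints=3)
print([round(x, 1) for x in history])
```

In this sketch three small fixes take settlements from 144 to roughly 259 a month, and each one ships and is measured before the next begins. The constraint also moves between sprints, from documents to application and back, which is why the measurement step is not optional.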


Why big bang projects keep failing

The appeal of the comprehensive transformation programme is understandable. It is tidy. It has a defined scope, a defined budget, a defined go-live date, a compelling PowerPoint. It gives everyone a shared narrative. It feels like leadership.

The problem is that it commits enormous resources to solving a problem that has not been precisely diagnosed. Requirements are built from workshops and interviews — both systematically biased toward people's beliefs about where problems exist, rather than evidence of where they actually are. By the time the platform launches, the business has changed and the team has spent two years not improving anything.

The integration question as a worked example

Consider core banking integration, a decision that comes up in almost every digital lending programme. The conventional project approach mandates full, automated, real-time integration from day one. This is the "right" architecture. It is also expensive, time-consuming, and often the reason projects stall for months.

An OR-informed approach asks a different question: where in the current process is manual data entry between origination and core actually causing material problems? Is it causing errors? Causing delay? Is it a bottleneck, or is it a once-a-day, five-minute task that can be solved by hiring someone for $60,000 a year? Does it justify an automation project costing $300,000?

If the real bottleneck is upstream (customer abandonment during application, slow document turnaround, a decision process that takes days), then spending the integration budget on funnel analytics and a faster front-end experience may deliver ten times the return. The manual handoff to core can be automated later, once upstream constraints are resolved and volume justifies the investment.
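Using the two figures from the example, the payback arithmetic is short. This sketch assumes the automation removes the manual cost entirely and ignores its own running costs, both of which flatter the project.

```python
def payback_years(project_cost, annual_saving):
    """Years of avoided cost needed to recover the up-front project cost."""
    return project_cost / annual_saving


manual_cost_per_year = 60_000   # staffing the manual handoff
automation_cost = 300_000       # one-off integration project

years = payback_years(automation_cost, manual_cost_per_year)
print(f"Payback: {years:.1f} years")
```

A five-year payback is not necessarily wrong, but it has to compete with whatever the same budget would return if spent at the actual constraint.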

This is not a counsel of mediocrity. It is a counsel of sequencing.

A case study hiding in plain sight: a Big 4 bank's home lending hub

One of the most instructive examples of this tension (excellent diagnosis, big-bang response) comes from a Big 4 Australian bank's home lending transformation. The programme was anchored on a striking statistic: only 6% of customers who begin the home loan origination process end up funding a loan, leaving an estimated $47 billion sitting in the pipeline. That number is not a brand problem or a product problem. It is a throughput problem with a specific location.

The diagnosis was precise and correct. Document collection requiring more than fifteen items per application. A digital-to-lender handover that haemorrhaged leads. Channels that treated returning customers as unknown to the bank. These are identifiable constraints with specific locations in the value stream, exactly the kind of diagnostic that should drive a sequenced delivery model.

The response, a four-state, four-capability platform, was a compelling product vision. But building all four capability layers across all four customer states and sixteen parallel product streams was a lot to bite off, with many dependencies and risks. The OR question is not whether a new customer portal is the right destination. It is whether the delivery sequencing matches the urgency of the constraint it is trying to solve.

A 94% funnel loss means the fixes for the seeker-to-application conversion problem and the document collection bottleneck should be in production, measured, and improving before the property insights dashboard ships. That is not because the latter is less valuable, but because throughput compounds. Fixing what is upstream first gives you more volume to benefit from every downstream improvement and unlocks capital for further work.

This is not a criticism of the vision. It is the most common failure mode in large-scale digital transformation: a precise diagnosis followed by a response that hedges across the entire problem space rather than concentrating force on the identified constraint. Toyota did not become the world's most efficient automaker by promising a better car. They did it by eliminating one defect, in one production line, at a time.

When the constraint is the system itself

There is an important caveat to everything above. The OR approach — instrument, identify the bottleneck, fix it, repeat — assumes you have something to instrument and something to fix. If your current origination process is a monolith or is largely manual, that assumption breaks down. You cannot tune a system that has no tunable parts.

If loan applications travel through a single legacy platform with no API surface, no event logging, and no way to isolate one stage from another, then fixing the document collection step without touching everything else is not an option. The constraint is not a stage in the process. The constraint is the architecture. In that case, you may have no choice but to trigger a Loan Origination Transformation — a genuine platform replacement.

But even here, the OR discipline matters — perhaps more than ever. Because "we need to replace the origination platform" is not the same as "we need to replace the loan management system." And neither of those is the same as "we need a full core integration project." These are three different scopes, three different costs, and three different timelines. Conflating them is where big-bang risk re-enters through the back door.

And when you do choose an architecture, choose one that makes the OR approach possible going forward. A composable architecture — modular, API-first, with discrete services that can be replaced or upgraded independently — is not just a technical preference. It is what allows you to treat transformation as a continuous process of constraint removal rather than a series of high-stakes, high-cost replacement cycles. The ability to fix the document stage without touching decisioning. To upgrade the broker portal without rewriting the identity layer. To instrument one step without instrumenting everything.
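One way to picture that property in code: if every stage honours the same small contract, a single stage can be swapped or instrumented without touching its neighbours. The stage names, the applicant fields, and the decision rule below are all hypothetical placeholders, not a real credit policy or product API.

```python
from typing import Callable, Dict

# Contract: every stage takes an application and returns an updated copy.
Stage = Callable[[dict], dict]


def collect_documents_email(app: dict) -> dict:
    return {**app, "documents": "emailed checklist"}


def collect_documents_upload(app: dict) -> dict:
    # Replacement for the document stage only.
    return {**app, "documents": "guided upload"}


def decide(app: dict) -> dict:
    # Placeholder rule for illustration.
    ok = app["income"] >= 3 * app["repayment"]
    return {**app, "decision": "approved" if ok else "referred"}


def instrumented(name: str, stage: Stage, counts: Dict[str, int]) -> Stage:
    """Wrap one stage with a counter, leaving every other stage untouched."""
    def wrapper(app: dict) -> dict:
        counts[name] = counts.get(name, 0) + 1
        return stage(app)
    return wrapper


def run(pipeline: Dict[str, Stage], app: dict) -> dict:
    for stage in pipeline.values():
        app = stage(app)
    return app


counts: Dict[str, int] = {}
pipeline = {"documents": collect_documents_email, "decisioning": decide}
applicant = {"income": 9000, "repayment": 2500}
before = run(pipeline, applicant)

# Swap and instrument the document stage; decisioning is not modified.
pipeline["documents"] = instrumented("documents", collect_documents_upload, counts)
after = run(pipeline, applicant)
print(before["decision"], after["decision"], after["documents"], counts)
```

In a real system the contract would be an API or event schema rather than a Python function signature, but the design choice is the same: a narrow, stable boundary between stages is what makes one-constraint-at-a-time delivery possible.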

The monolith forces the big bang. The composable architecture makes it unnecessary. If you are forced into a transformation, use it as the opportunity to build the system that will never force you into that position again.

Where to start

The entry point is always the same: measure the current state. Not what you believe it is — what the data says it is. If you do not have the instrumentation to measure your origination funnel end-to-end, that is your first project. Everything else follows.

It is unglamorous work. It does not make for a compelling conference presentation. But it is the work that actually changes outcomes — and it can begin this quarter, not after the next planning cycle, not after the next budget approval, and not after another eighteen months of requirements gathering.

The factory figured this out a long time ago. It is time the bank caught up.

Moroku builds digital origination tools designed around funnel visibility, constraint identification, and modular deployment — so you can start fixing what's actually broken.
